Some of my favorite tweets on #SherpaLPO (the hashtag for Optimization Summit in Atlanta) reflect the stark difference between evidence-based marketing and “song and dance” marketing…

Landed safe and sound in Atlanta, ready to nerd it up tomorrow with fellow website optimizers #SherpaLPO http://ow.ly/57ghH

Getting ready to geek out with @MarkKilens and @mgieva at #SherpaLPO

To use a high school analogy, marketers are often thought of as the popular people – the Student Government president, the captain of the football team (or perhaps curling team for our Canadian friends).

But the 139 marketers listening to Dr. Flint McGlaughlin teach right now in our pre-Optimization Summit Landing Page Optimization Workshop in Atlanta (the next stops of this workshop will be in New York and San Francisco) are not seeking to learn about better ways to add a winning smile or flashy move to their marketing campaigns.

Evidence-based marketers are a little different. They are the chess club president or captain of the academic team (don’t worry, popularity comes when you start marketing based on business intelligence, instead of just intuition, and your campaigns produce results).

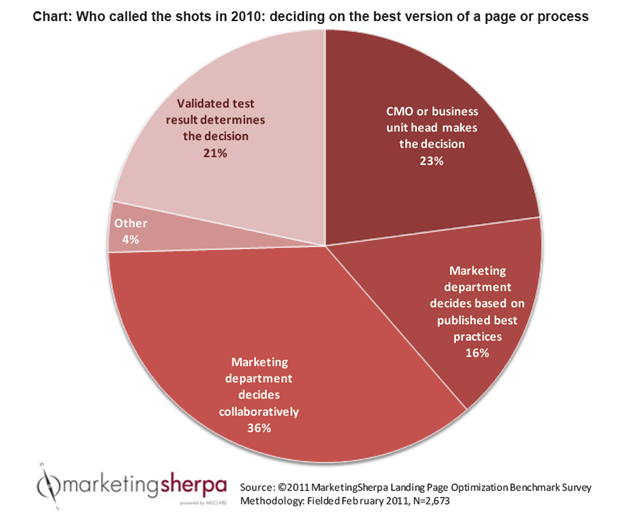

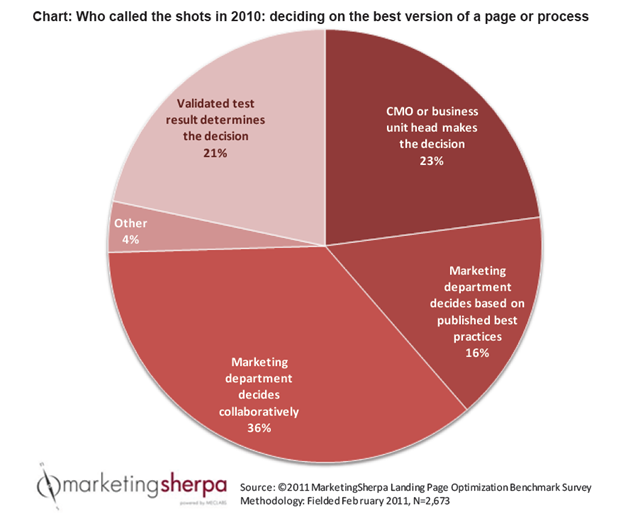

To drive that point home, Dr. McGlaughlin just referenced this chart from the 2011 MarketingSherpa Landing Page Optimization Benchmark Report (every attendee receives a free copy of this $447 book)…

As you can see, only about one-fifth of marketers make decisions based on business intelligence – validated test results. This is particularly interesting to me since tomorrow at Optimization Summit I’ll be leading a panel on validity, an often overlooked and misunderstood topic among those new to testing.

But I especially liked Dr. McGlaughlin’s quote about the 16 percent of marketers who use best practices…

“In optimization, ‘best practices’ is a dangerous concept. I’ve seen companies that say ‘I built this website because Competitor B was doing it this way.’ And the sad part is, sometimes I knew Competitor B and knew that they were only building their website that way because that’s what Competitor C was doing. It’s pooled ignorance, it’s not best practices. We don’t need more ‘best practices,’ we need testing.”

Related Resources

Online Testing: 3 takeaways to get the most out of your results

Online Marketing Tests: How could you be so sure?

Marketing Optimization: How to determine the proper sample size

The Fundamentals of Online Testing online training and certification course

Daniel, this is a sad state of affairs which, I believe, trickles down to the smallest solopreneur. That pie would really be skewed on that end, as solopreneurs don’t have marketing heads (LOL – unless it’s their own).

Too many of us, myself included, tend to rely overmuch on so-called best practices. I’m so glad you shared Dr. McGlaughlin’s quote. “Pooled ignorance” is a stark contrast to “Wisdom of the crowds”.

Cheers,

Mitch

Great post. How many times have all of us been surprised by the facts? How many times are the facts counter-intuitive? May marketing math geeks be highly valued by CEOs.