ROI is not a result of A/B testing. It is a side effect.

Too many marketers waste time and resources assuming that if they simply create an A/B test, or test different elements on a page, they’ll automatically see results.

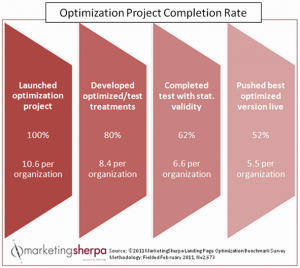

It’s more likely that a marketer will start split testing, and in a matter of months, they will hit a wall where it’s no longer profitable to run said tests. Take, for example, this chart from MarketingSherpa’s 2011 Landing Page Optimization Benchmark Report:

Of the 2,673 marketers surveyed, only 52% of them finished a complete testing cycle.

A little more than half actually had the time, resources and commitment to push through and complete the project.

And even if they did complete the project, I’d love to see the completion rate for the second project. There’s probably another sharp drop-off there.

So the question is: with testing touted all over the Internet as the silver bullet for achieving a tangible ROI, why aren’t we seeing it?

Surely, if the ROI was so tangible, we’d have no choice but to complete our optimization projects, right?

Why We Aren’t Seeing Results

As marketers, it’s our job to get results. If we don’t, we lose our jobs.

But this kind of pressure skews our thinking. Instead of taking the time to methodically plan and strategize, we run around like headless chickens looking for quick fixes.

And as with any quick fix, we end up with second-rate results.

As Dr. Flint McGlaughlin has pointed out, more valuable than any quick fix is the reason behind customer behavior.

So while most marketers can certainly slap together two treatments and see which one gets a higher conversion rate, the marketer who asks why more customers responded to one treatment over the other gleans the maximum customer insight.

Once you’ve gained enough customer insight, you can begin to predict your customers’ behavior. And when you can predict their behavior, it takes less time and fewer resources to get the results you want (or results you probably didn’t think you could achieve).

This is precisely why the goal of a test is not to get a lift, but rather to get a learning.

The Result of Focusing on Results

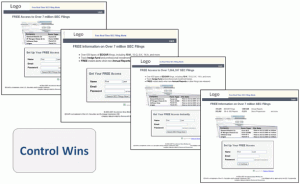

Let me give you a couple of examples from our own library of tests. The first example is the results-first approach. One of our researchers lost sight of gaining the maximum customer insight and simply came up with what he/she thought would be some ways to get quick results. The treatments looked like this:

When the results came in, the control won. The problem is, we don’t know why. Here’s what the researcher had to say in our Test Protocol:

For some reason, the combination of elements on the control outperformed the treatment pages. It could be the headline/graphic combo without the text, the overall simplicity of that version, or anything in between … But what we do know is that the control is best 🙂

If our researcher had taken the time to focus on what he/she was trying to learn about the customer, this test wouldn’t have been fruitless. As it stands, however, it was essentially a waste of time and resources.

The Result of Focusing on Customer Insight

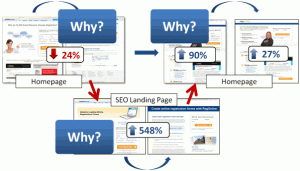

However, when we can use a test to effectively hone in on what makes our customers tick, we can gain the maximum result. Take this test that was featured in Negative Lifts: How a 24% loss produced a 141% increase in conversion, for instance:

By focusing on the maximum customer insight, the marketer in this case was able to turn an initial loss into an advantage. From the initial test, they learned that a process-based approach to conversion (i.e., sign up for your free trial) did not resonate with customers.

For the second test, they moved to a lower-risk page with similar customer traffic and tested a product-based approach to conversion (i.e., this product is valuable and you should try it).

When they discovered that this approach worked, it eventually led to a 141% increase in conversion on the homepage. Not only that, but they were able to apply the same insight to all of their pages for a huge lift across their entire Marketing-Sales funnel.
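Figures like "a 24% loss" and "a 141% increase" are, as is common in testing reports, presumably relative changes in conversion rate: the treatment’s rate minus the control’s, divided by the control’s. The raw numbers from this test aren’t given here, so the minimal sketch below uses hypothetical visitor and conversion counts purely to show the arithmetic; the function names are illustrative and not taken from any testing tool.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who converted."""
    return conversions / visitors


def relative_lift(control_rate: float, treatment_rate: float) -> float:
    """Relative change of the treatment versus the control.

    A return value of -0.24 reads as "the treatment lost by 24%";
    a value of 1.41 reads as "a 141% increase".
    """
    if control_rate == 0:
        raise ValueError("control conversion rate must be non-zero")
    return (treatment_rate - control_rate) / control_rate


if __name__ == "__main__":
    # Hypothetical counts, NOT the data from the test described above.
    control = conversion_rate(250, 10_000)    # 2.5% conversion
    treatment = conversion_rate(190, 10_000)  # 1.9% conversion
    print(f"Lift: {relative_lift(control, treatment):+.0%}")
```

A relative lift on its own says nothing about whether the difference is real or just noise; that question belongs to the statistical-significance check in Step 5.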

6 Steps to A/B Testing for a Learning, not Just a Lift

While it’s great to see examples of what happens when you focus on customer insight instead of instant results, you’re probably wondering if there is a systematic, useful way to run your tests without acting like a headless chicken.

Yes. There is.

In fact, we take this systematic approach every time we run a test (or at least we’re supposed to). And while I can’t give you our entire process, I can give you the basic steps needed for you to accomplish it in your own tests.

Step 1: Gather the customer data you already have

The first step involves gathering everything you already know about how your customers act on your pages and even off your pages (on your competitors’ pages).

If you’ve already run some A/B tests on your site, great! Use that data if you can. If not, use what you have. Here are a few places you can look:

- Your analytics platform

- Customer reviews

- Social media

- Quick Google searches for your keywords

- Conversations with Sales/Customer Service

Step 2: Determine some of the major problems in your conversion process

Once you’ve gathered all the data you can, it’s time to sift through it and determine where the major problems are in your conversion process.
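If your analytics platform can export how many visitors reach each step of your conversion process, one rough first pass is to compute the step-to-step conversion rates and flag the steepest drop-off. The minimal sketch below assumes such an export; the step names, counts and helper functions are hypothetical. It only shows where customers leave, not why, so treat its output as a place to start looking rather than a diagnosis.

```python
# Hypothetical funnel: number of visitors reaching each step.
# Replace with numbers exported from your own analytics platform.
FUNNEL = [
    ("Landing page", 10_000),
    ("Product page", 4_200),
    ("Cart", 900),
    ("Checkout", 610),
    ("Purchase", 480),
]


def step_conversion(funnel):
    """Return (from_step, to_step, rate) for each adjacent pair of steps."""
    rates = []
    for (name_a, count_a), (name_b, count_b) in zip(funnel, funnel[1:]):
        # Share of visitors at step A who continue to step B.
        rate = count_b / count_a if count_a else 0.0
        rates.append((name_a, name_b, rate))
    return rates


def biggest_leak(funnel):
    """Return the transition with the lowest step-to-step conversion rate."""
    return min(step_conversion(funnel), key=lambda step: step[2])


if __name__ == "__main__":
    for a, b, rate in step_conversion(FUNNEL):
        print(f"{a} -> {b}: {rate:.1%}")
    a, b, rate = biggest_leak(FUNNEL)
    print(f"Largest leak: {a} -> {b} ({rate:.1%} continue)")
```

Some steps naturally lose more visitors than others, so the lowest rate is a candidate problem, not a confirmed one; this is where a heuristic for thinking through why customers leave earns its keep.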

Of course, this step is much easier said than done. We were having trouble doing this step ourselves a few years back. To help us think through the problems in our conversion process, we developed the patented MarketingExperiments Conversion Sequence heuristic. Since its inception, marketers like you have used it all over the world to identify problems with their pages (example).

Mastering the ability to identify every potential conversion leak on your pages takes a while. If you’d like to get better at it, you can take our paid LPO course.

Step 3: Hypothesize solutions that directly correspond to those problems

Step 3 happens to be my favorite. It’s the quickest step and certainly the easiest to pull off. It does require you to write down a sentence, though, so get your pen out.

Let’s say that by applying the Conversion Sequence heuristic to your existing customer data, you’ve found that most customers are anxious about buying product X from you because they’ve never heard of your company.

Assuming this is the biggest problem you’ve found, and therefore your priority, you might hypothesize that treatments highlighting your company’s credibility will produce a conversion lift.

Whatever your hypothesis is, write it down. You’ll need it in the next step.

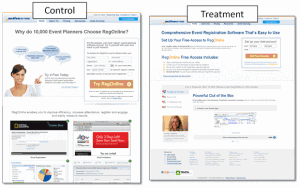

Step 4: Develop treatments that directly test your hypothesis

This is usually where marketers start running around looking for results instead of insight. They sit down with their wireframing tool and get carried away with cool page designs that may or may not test their hypothesis.

Resist the temptation to develop treatments you think will get you results. Stick to the plan.

You need treatments that test your hypothesis and ONLY test your hypothesis.

Please understand that this does not necessarily mean you can only run single-factor split tests. It does mean that every variable you change must be one you expect to help solve the problem, so that the change tests the hypothesis you originally developed.

This is why in the example above we were able to change so many variables and still test our hypothesis. Our hypothesis in the first test was that by focusing on the process-level value we would be able to get a lift. Every change we made to the page was laser-focused on creating process-level value rather than product-level value.

And this is precisely why, when the treatment lost by 24%, we were able to completely rule that approach out as a means of getting results.

That knowledge proved invaluable for the follow-up tests and ultimately resulted in impressive site-wide conversion lifts.

Step 5: Run a valid test

This seems to be the hardest part for many marketers. Besides calculating statistical significance, you need to consider several other validity threats that can undermine your test results.
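For the statistical-significance part, one common approach is a two-sided, pooled two-proportion z-test on the control’s and treatment’s conversion counts. The minimal sketch below uses only Python’s standard library; the function name, the example counts and the 0.05 threshold are hypothetical choices, not taken from any particular course or tool. It assumes each visitor is counted once, in one arm only, and that the sample size was fixed before the test started. It does nothing about the other validity threats mentioned above.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided pooled z-test for a difference in conversion rates.

    conv_a / n_a: conversions and visitors for the control (A)
    conv_b / n_b: conversions and visitors for the treatment (B)
    Returns (z, p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under the null hypothesis both arms share one conversion rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("need at least one conversion and one non-conversion")
    z = (p_b - p_a) / se
    # Two-sided p-value: P(|Z| >= |z|) = erfc(|z| / sqrt(2)) for a standard normal Z.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: control 250/10,000 vs. treatment 190/10,000.
    z, p = two_proportion_z_test(250, 10_000, 190, 10_000)
    alpha = 0.05  # significance threshold, chosen before the test runs
    verdict = "significant" if p < alpha else "not significant"
    print(f"z = {z:.2f}, p = {p:.4f} ({verdict} at alpha = {alpha})")
```

A p-value below the threshold suggests the difference is unlikely to be noise alone. It does not tell you why customers responded differently, which is the learning this whole process is after.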

To truly understand how to run a valid test, I’ll shamelessly promote our online testing course. As far as I know, it’s the only serious online testing course available. And if I’m wrong about that, it’s certainly the best. And, if I’m wrong about that, at least you know where it is (unlike that other, better online testing course).

Step 6: Gather the customer data you’ve just generated (rinse and repeat)

If you’ve stuck around this long, you now have clean, usable data for gazing into the mind of your customer. Starting to sound familiar?

That’s because it’s time to repeat the process until you see real results.

While you may not get a lift on your first test, at least you’ll know why. And that’s one step ahead of your competition on the road to real results with A/B testing.

Related Resources:

Optimization Summit 2012 — June 11–14, 2012 in Denver

Website Optimization: Testing program leads to 638% increase in new accounts

Marketing Optimization: You can’t find the true answer without the right question

Website Optimization: Landing page test leads to 548% increase in conversion

Email Marketing Optimization: How you can create a testing environment to improve your email results